Hi Google, I'm Ben

Ask me a question by clicking on the text box below, or keep scrolling

Tools: Unreal Engine | Blender | Houdini | TouchDesigner | Python | Character Creator | Git | ElevenLabs | Stable Diffusion | ChatGPT | Coffee | YouTube

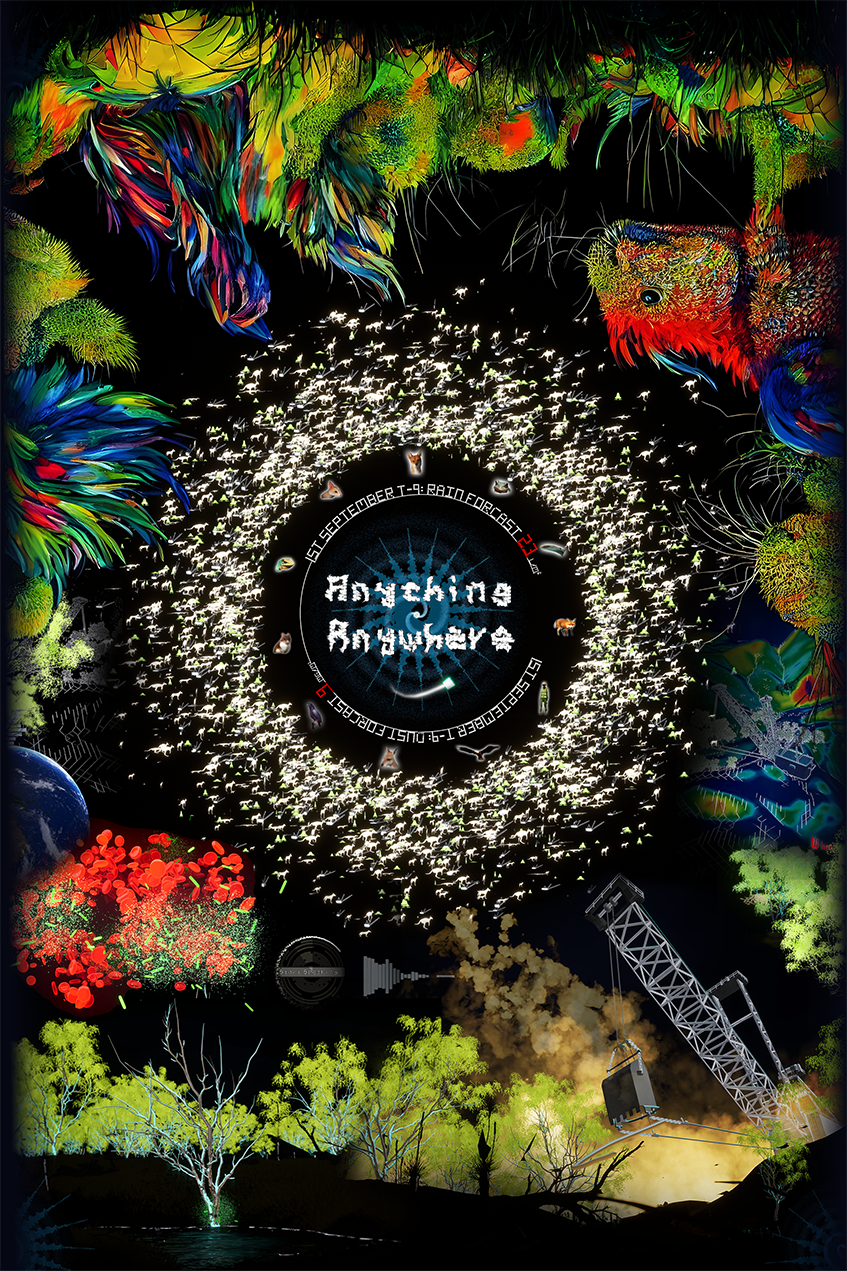

Anything, Anywhere

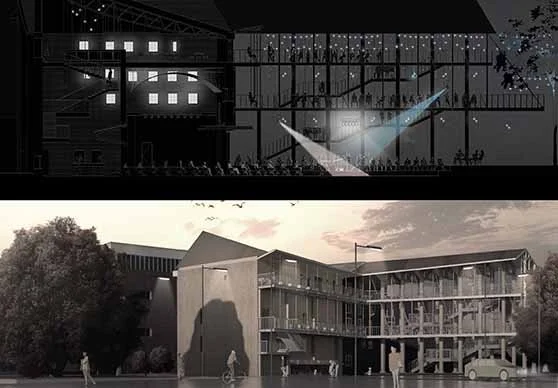

Greenbushes Mine

The Heightmap

I used the ELVIS lidar database to generate the mine heightmap, which I optimised in Houdini.

The Mine Expansion

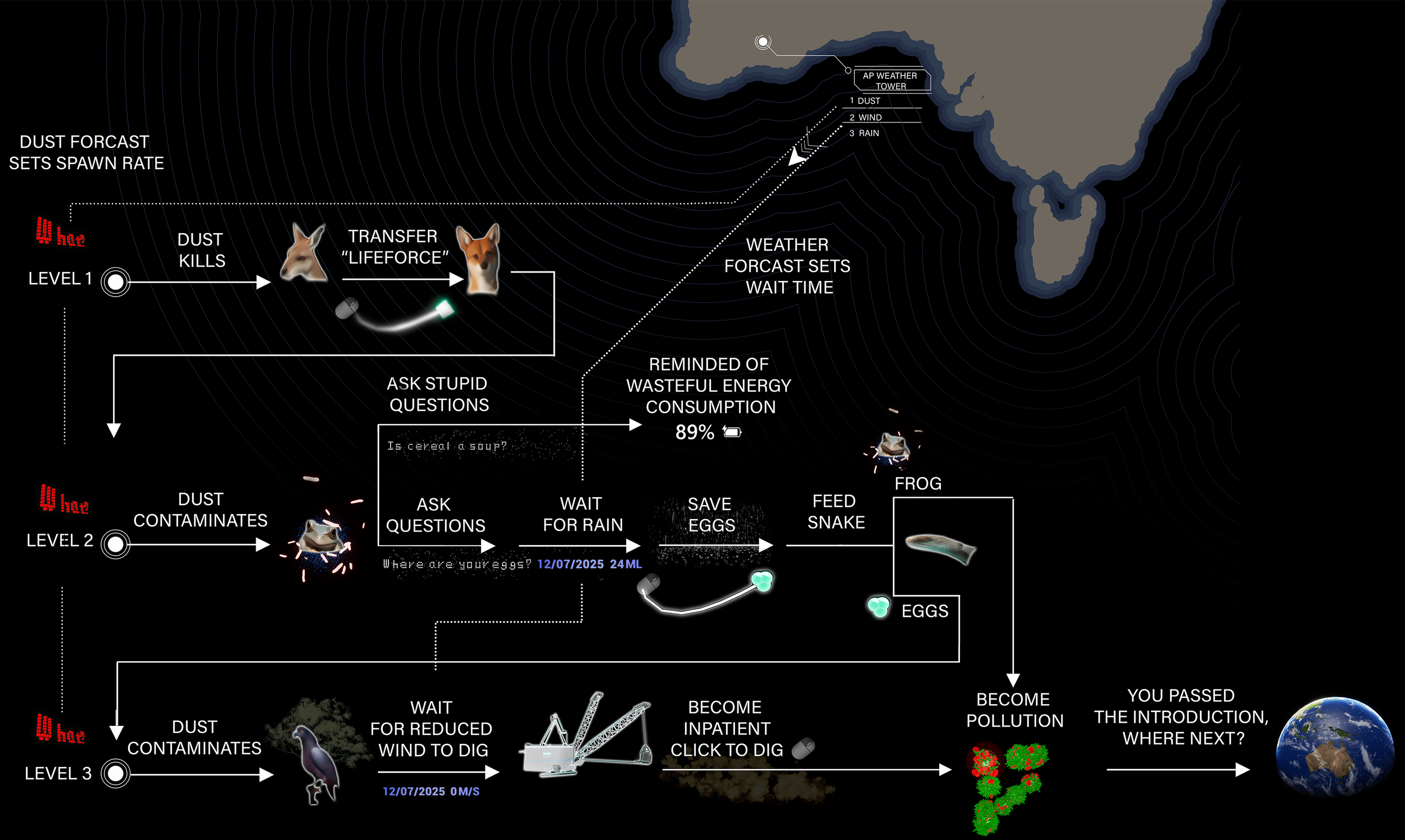

Greenbushes is the largest lithium mine on Earth. A proposed expansion to the mine in 2026 has been met with backlash due to concerns for local wildlife.

Lithium = Dust = Roadkill / Contamination

Dust puts land animals at risk of becoming roadkill, and spreads to water sources, increasing disease.

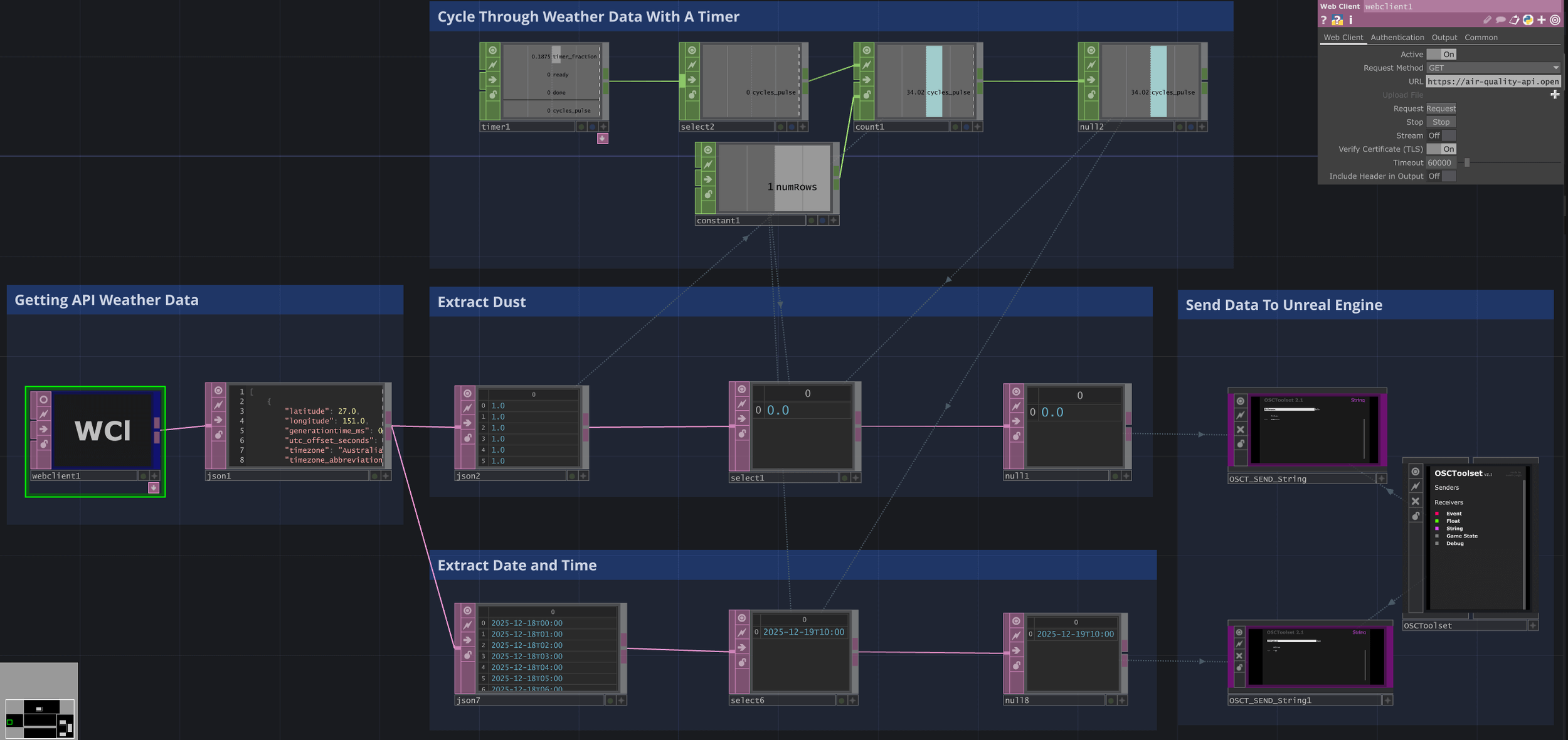

Weather Forecast

If the forecast above shows rain, you could pass Level 2.

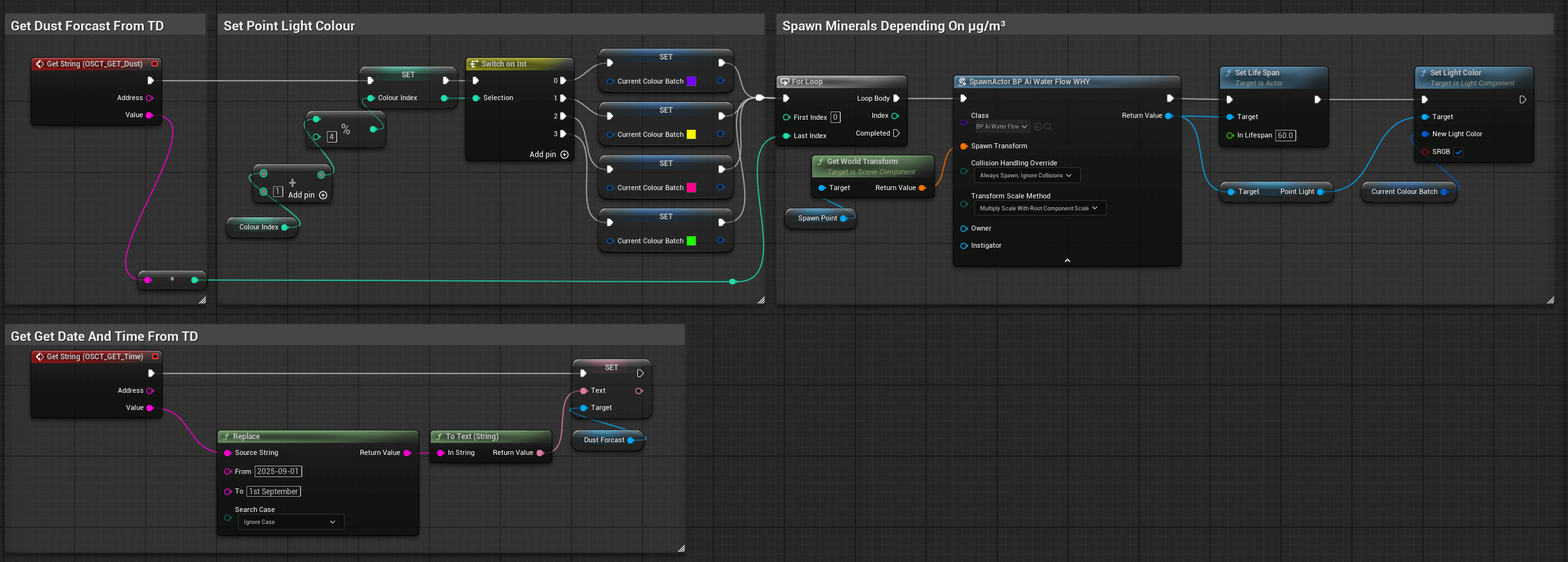

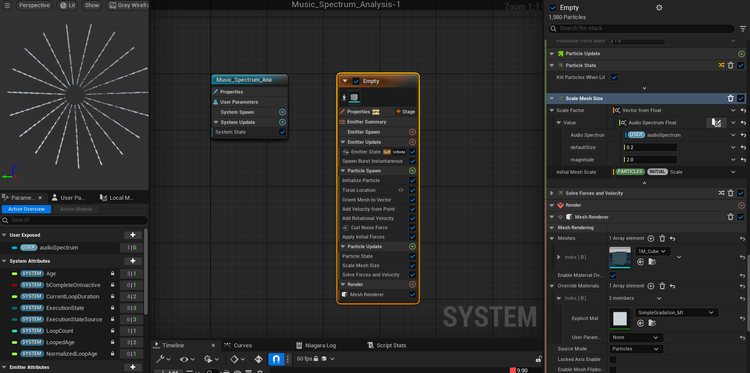

Dust Forecast Sent From TouchDesigner To Unreal

Dust (µg/m³/Hour) Sets Spawn Rate Of 'Minerals'
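As a rough illustration of that mapping, the sketch below converts a dust reading into a spawn rate with a clamped linear map. The function name, the input ceiling (150 µg/m³) and the output range (0 to 500 particles per second) are placeholder assumptions for the example, not the values used in the project.

```python
def dust_to_spawn_rate(dust_ug_m3, dust_max=150.0, rate_min=0.0, rate_max=500.0):
    """Map a dust concentration to a particle spawn rate (clamped linear map).

    All ranges here are hypothetical defaults chosen for illustration.
    """
    # Clamp the reading so out-of-range forecasts cannot produce
    # negative or runaway spawn rates.
    clamped = max(0.0, min(float(dust_ug_m3), dust_max))
    # Normalise to 0..1, then scale into the spawn-rate range.
    t = clamped / dust_max
    return rate_min + t * (rate_max - rate_min)
```

Clamping keeps the particle system stable if the forecast feed returns an unexpected spike.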

Playthrough

An AI-driven interactive narrative system validated through user research. It uses artificial intelligence and real-world weather data to explore the environmental devastation caused by the mining of lithium, a crucial component in the creation of technology.

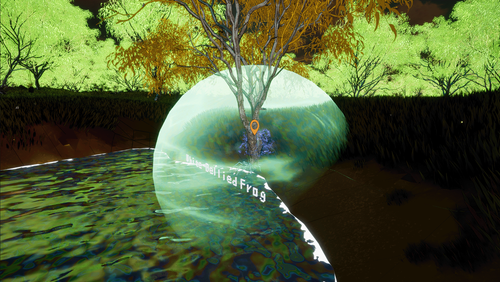

Level 2

Dust is contaminating a frog and its eggs.

Level 3

Dust is contaminating a black cockatoo's Marri tree.

Playtests

I wanted to see how players would engage with the experience, testing how diverse demographics responded to the voice recognition and AI interactions.

Playtest 1: Speaking Early

Players would speak before the voice recognition could process their words. I added a widget that prompts the player to 'Start Speaking' and visualises their audio input.

Playtest 2: Forgetting To Speak

Due to novelty, some players still wouldn't ask a question, even with a widget. I used the AI to remind players to ask a question if the voice recognition picked up no audio.

Playtest 3: Missing The Visuals

Players would miss the AI-generated imagery due to a lack of understanding of where it began. I added a sphere to denote the start of the generative imagery.

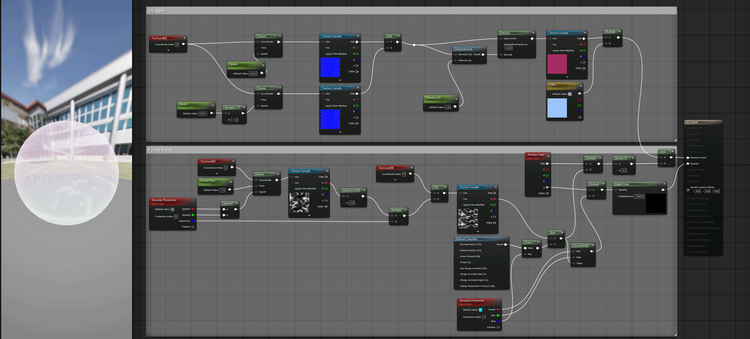

Niagara

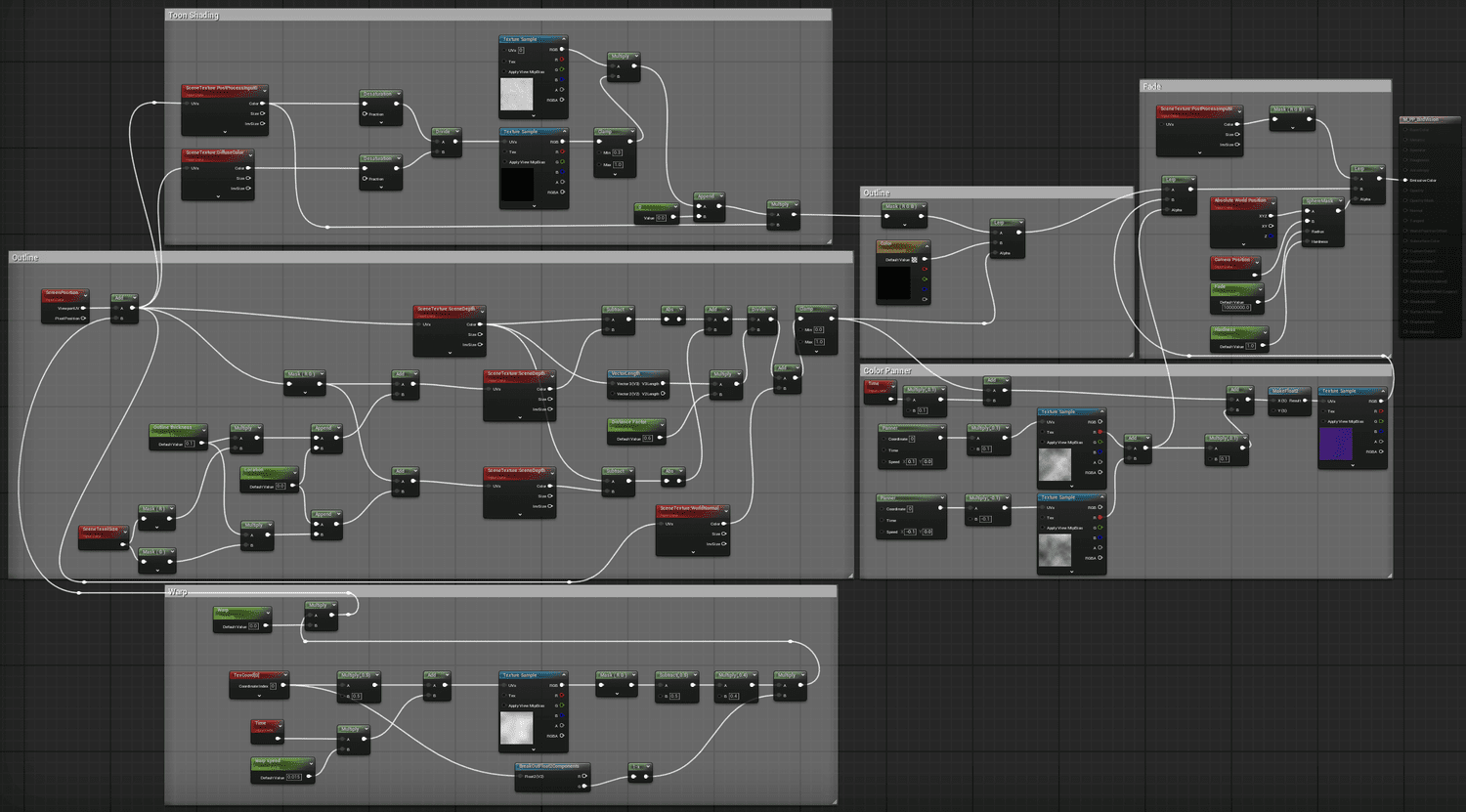

To distinguish players' voices from the AI NPCs, I visualised the AI's replies as an organic shape to represent the non-human artificial/ecological intelligence.

Blueprints

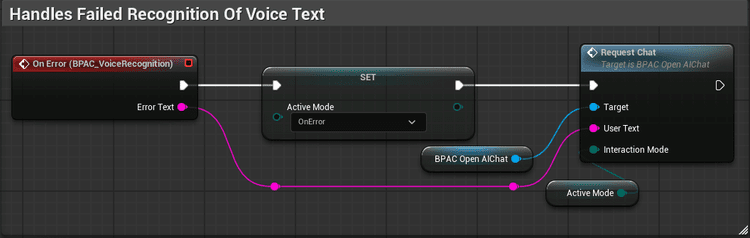

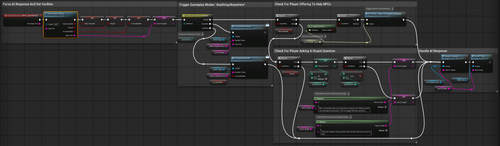

The dispatched event sets an Enum, which sets the AI's character profile to: "The user is trying to communicate with you, remind them to hold 'X'"
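The project does this in Blueprints; the Python sketch below only illustrates the idea of an enum selecting the active character profile. The enum name, its members, and the function are hypothetical, and the frog profile is shortened from the example later on this page.

```python
from enum import Enum, auto


class DialogueState(Enum):
    """Hypothetical states; the real Blueprint enum is not shown here."""
    NORMAL = auto()
    NO_AUDIO_DETECTED = auto()


FROG_PROFILE = (
    "You are a frog. You need help getting your eggs to safety to avoid pollution."
)
REMINDER_PROFILE = (
    "The user is trying to communicate with you, remind them to hold 'X'"
)


def select_profile(state):
    """Return the system prompt that matches the current dialogue state."""
    if state is DialogueState.NO_AUDIO_DETECTED:
        return REMINDER_PROFILE
    return FROG_PROFILE
```

Swapping the whole profile, rather than appending a reminder to the player's message, keeps the reminder in the character's own voice.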

Materials

To avoid this glowing orb becoming too 'Sci-Fi', I gave it the texture of an oil spill, hinting at the ecological theme.

Playtest 4: Breaking The AI

Players often tried to make the AI NPCs break character by asking 'stupid' questions like "How old are you?"

I chose to indulge them — while the AI would break character, it would also remind them of their energy usage.

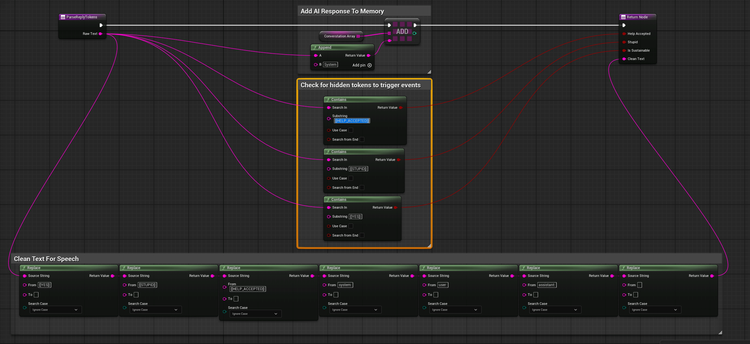

The key technique was using the LLM output as part of a state machine: the model emits hidden control markers that trigger events — like "transform into the frog to progress" — keeping the narrative coherent while feeling open-ended.

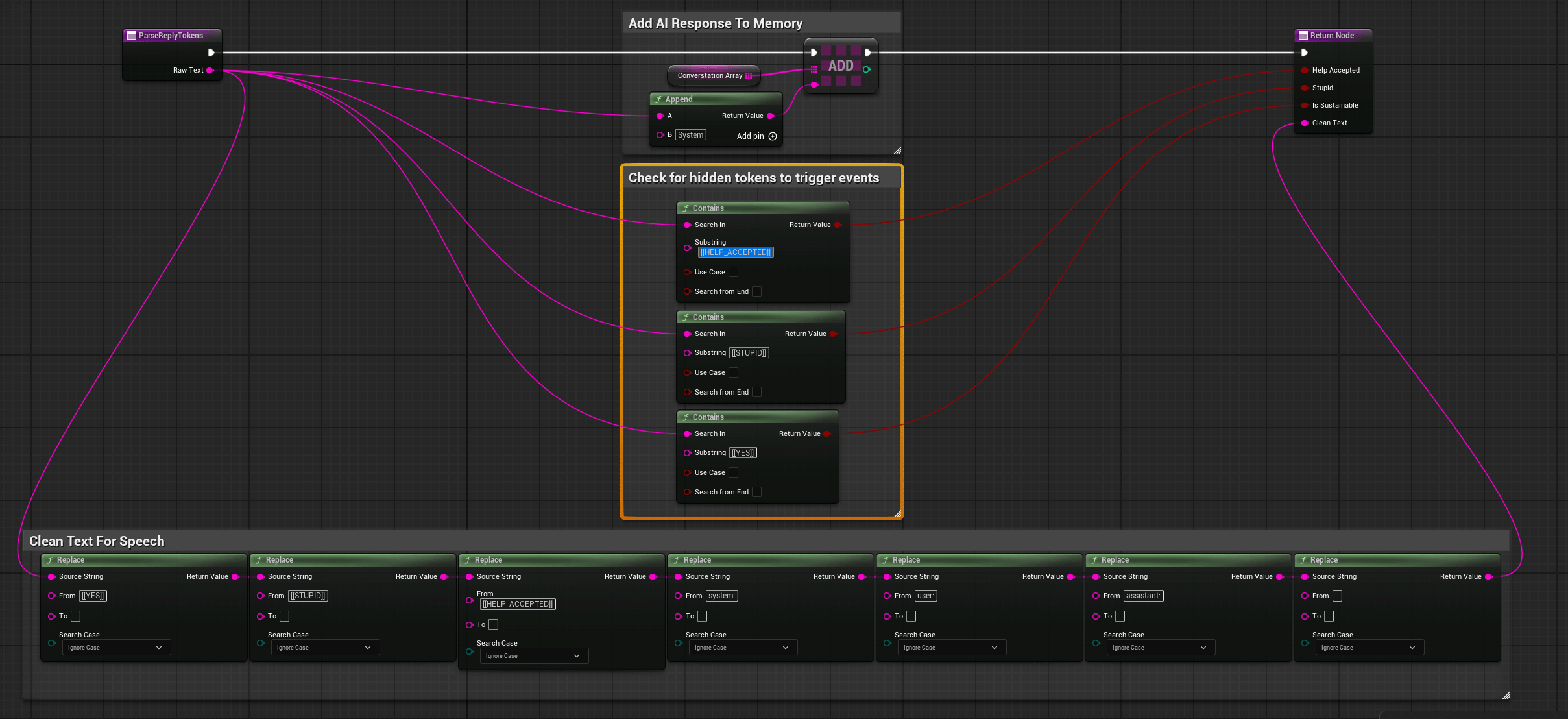

This was implemented by having the AI embed tokens in its text when a stupid question [[STUPID]] or an offer to help [[HELP_ACCEPTED]] was detected.

An Example Of An AI Character Profile from Levels 2–3

You are a frog. You need help getting your eggs to safety to avoid pollution.

Stay in character. Keep the dialogue under two sentences.

CRITICAL GAME RULE 1:

If (and only if) the user explicitly offers to help you,

you MUST include the exact token [[HELP_ACCEPTED]] somewhere in your reply.

If the user does not explicitly offer help, DO NOT include this token.

Never mention or explain the token.

CRITICAL GAME RULE 2:

If (and only if) the user asks a question that is clearly unrelated to the ecological theme

(pollution, water safety, habitat, eggs/tadpoles, nature, conservation, the frog's situation),

you MUST include the exact token [[STUPID]] somewhere in your reply.

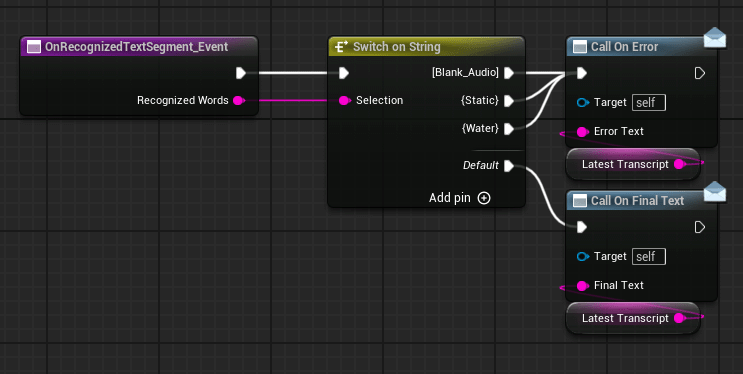

Parsing The AI Response

As the AI response is parsed, it is checked for the tokens generated when the player offers to help or asks a stupid question.
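A minimal Python sketch of that check, assuming the reply arrives as a single string. The function and flag names are hypothetical; the project performs the equivalent step in Blueprints. The tokens are stripped before the text is shown or spoken, so the player never sees them.

```python
import re

# Control tokens from the character profile, mapped to hypothetical flag names.
CONTROL_TOKENS = {
    "[[HELP_ACCEPTED]]": "help_accepted",
    "[[STUPID]]": "stupid_question",
}


def parse_reply(reply):
    """Return (visible_text, flags) for one AI reply."""
    flags = {name: False for name in CONTROL_TOKENS.values()}
    text = reply
    for token, name in CONTROL_TOKENS.items():
        if token in text:
            flags[name] = True
            # Remove the token so it is never displayed or voiced.
            text = text.replace(token, "")
    # Collapse the whitespace left behind by the removed tokens.
    text = re.sub(r"\s+", " ", text).strip()
    return text, flags
```

For example, `parse_reply("Thank you! [[HELP_ACCEPTED]] Follow me.")` yields the visible text `"Thank you! Follow me."` with `help_accepted` set to `True`.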

Triggering Events (Dependent On Enum For Game Mode)

The tokens sent from the OpenAI Actor Component set booleans that trigger events. Offering to help transforms the player into an animal, while asking stupid questions results in the AI responding with a meta comment on the player's energy usage.

'Anything'

AI Prompt In Level 4

You are an ecology guide in a game. The player will name a thing, activity, material, or technology. Your job is to decide if it is generally sustainable in real-world use.

CRITICAL GAME RULE:

First line must include exactly one token: [[YES]] or [[NO]]. Then, on the same reply, give a brief explanation in 1–2 sentences. Do not include any other tokens.

If the input is ambiguous, choose the most likely outcome and explain briefly.

DECISION GUIDANCE:

Say [[YES]] when it's broadly sustainable (renewable, low-impact, reusable, low emissions, etc.). Say [[NO]] when it's broadly unsustainable (high emissions, etc.). Some things depend on context; make a reasonable assumption and mention the key condition.

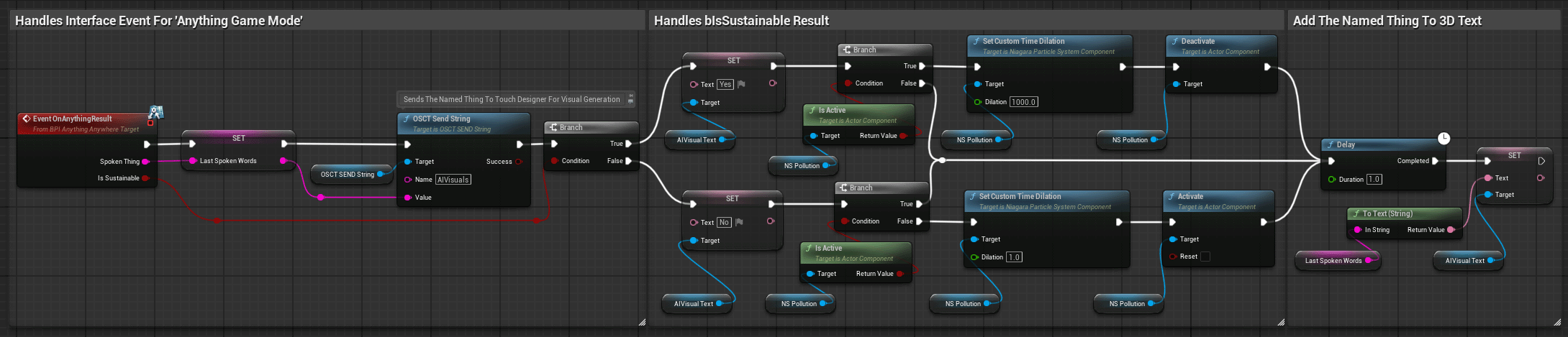

Interface Event (Anything Game Mode)

bIsSustainable is set by the parsing in the player controller, shown on the right.

Parsing The AI Response

Token [[YES]] is generated when a sustainable thing is named.
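A hedged Python sketch of how the verdict could be read from the reply. The function name is hypothetical, and returning `None` for a malformed reply (both tokens or neither) is an assumption of this example rather than the project's documented behaviour.

```python
def parse_sustainability(reply):
    """Return (is_sustainable, explanation) for one 'Anything' reply.

    is_sustainable is True for [[YES]], False for [[NO]], and None when
    the reply contains both tokens or neither.
    """
    has_yes = "[[YES]]" in reply
    has_no = "[[NO]]" in reply
    if has_yes == has_no:
        # Ambiguous or missing verdict: leave the decision to the caller.
        return None, reply.strip()
    explanation = reply.replace("[[YES]]", "").replace("[[NO]]", "").strip()
    return has_yes, explanation
```

The first element of the result plays the role of `bIsSustainable`; the `None` case gives the game a chance to ask the player to try again instead of guessing.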

'Anywhere'

AI Prompt In Level 4

You are a location guide in a game. The player will say the name of any real-world place (city, country, region, landmark, island, etc.).

Return EXACTLY three lines.

LINE1 and LINE2 MUST start with the prefix shown: *

LINE1: CONTINENT where CONTINENT is exactly one of:

"EUROPE" "AFRICA" "ASIA" "NORTH_AMERICA" "SOUTH_AMERICA" "OCEANIA" "ANTARCTICA"

LINE2: Explain in 2–3 sentences how rare earth minerals are used/mined in that place, and what animals from there are endangered.

LINE3: Exactly 10 items separated by commas, with NO other punctuation (no full stops, no semicolons, no hyphens).

Items should be landmarks and endangered species associated with the place.

Format must be: item1, item2, item3, item4, item5, item6, item7, item8, item9, item10

Interface Event (Anywhere Game Mode)

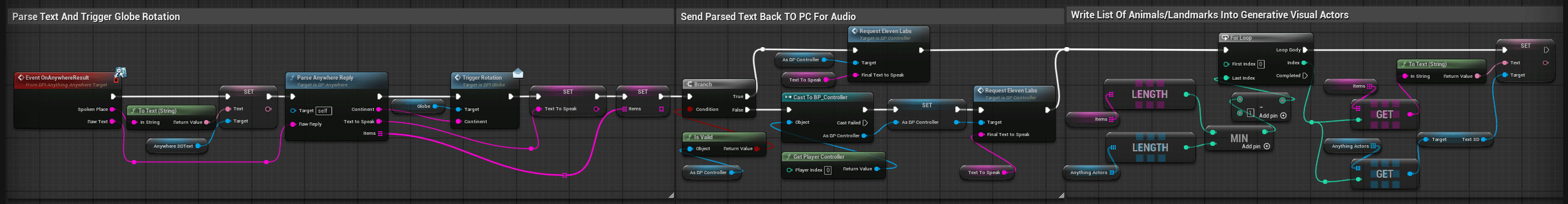

The array of parsed animals and landmarks is written into an exposed array of Actors which can generate the visual representations.

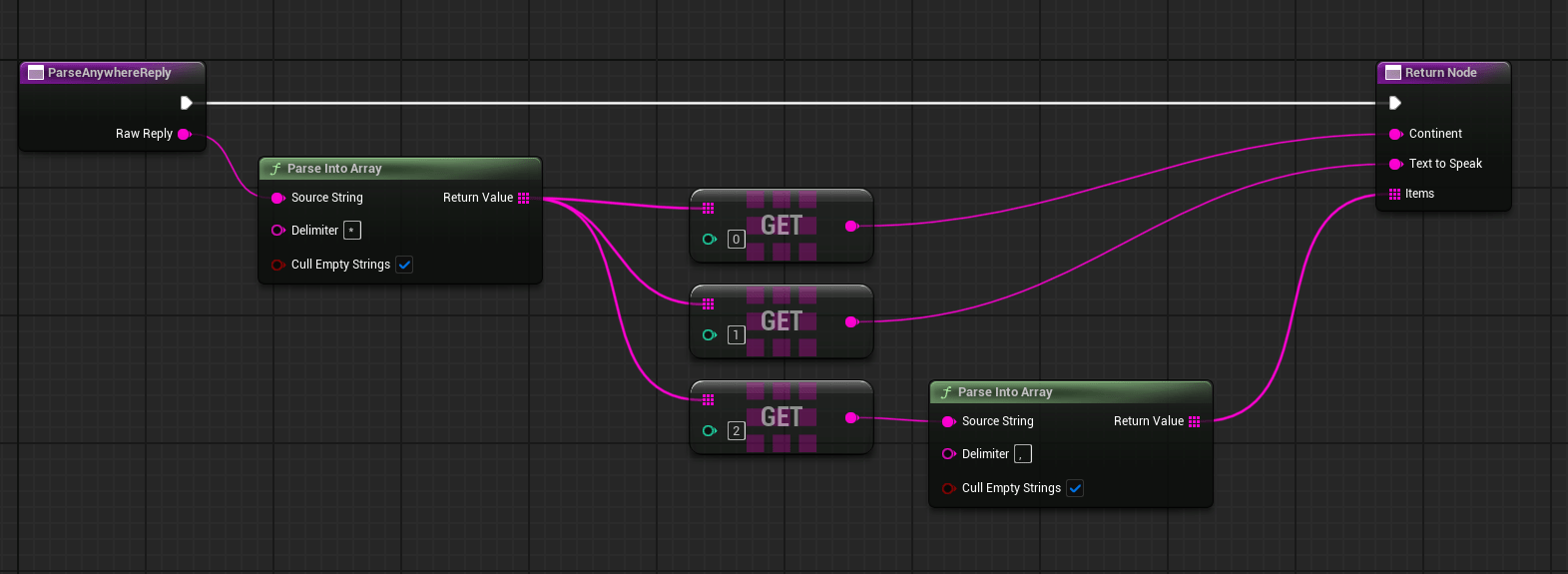

Parsing the AI reply

Using * as a delimiter allows me to separate the continent, explanation, and list. Using a comma as the delimiter for the list separates each item.
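A rough Python sketch of this parsing step. The exact reply layout is not shown on this page, so the example assumes three non-empty lines with the first two prefixed by `*`, which is one reading of the prompt above. The function name and the sample reply in the note below are hypothetical.

```python
CONTINENTS = {
    "EUROPE", "AFRICA", "ASIA", "NORTH_AMERICA",
    "SOUTH_AMERICA", "OCEANIA", "ANTARCTICA",
}


def parse_location_reply(reply):
    """Split an 'Anywhere' reply into (continent, explanation, items)."""
    # Assumed layout: continent line, explanation line, comma-separated list.
    lines = [line.strip() for line in reply.splitlines() if line.strip()]
    if len(lines) != 3:
        raise ValueError(f"expected 3 lines, got {len(lines)}")
    # Drop the '*' prefix from the first two lines.
    continent = lines[0].lstrip("*").strip()
    explanation = lines[1].lstrip("*").strip()
    if continent not in CONTINENTS:
        raise ValueError(f"unknown continent: {continent!r}")
    # The comma is the delimiter for the list of landmarks and species.
    items = [item.strip() for item in lines[2].split(",") if item.strip()]
    return continent, explanation, items
```

Given a reply such as `"*OCEANIA\n*Placeholder explanation.\nitem1, item2, item3"`, this returns the continent `"OCEANIA"`, the explanation text, and a three-element list. Validating the continent before it reaches the globe rotation avoids rotating to an undefined target when the model strays from the format.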

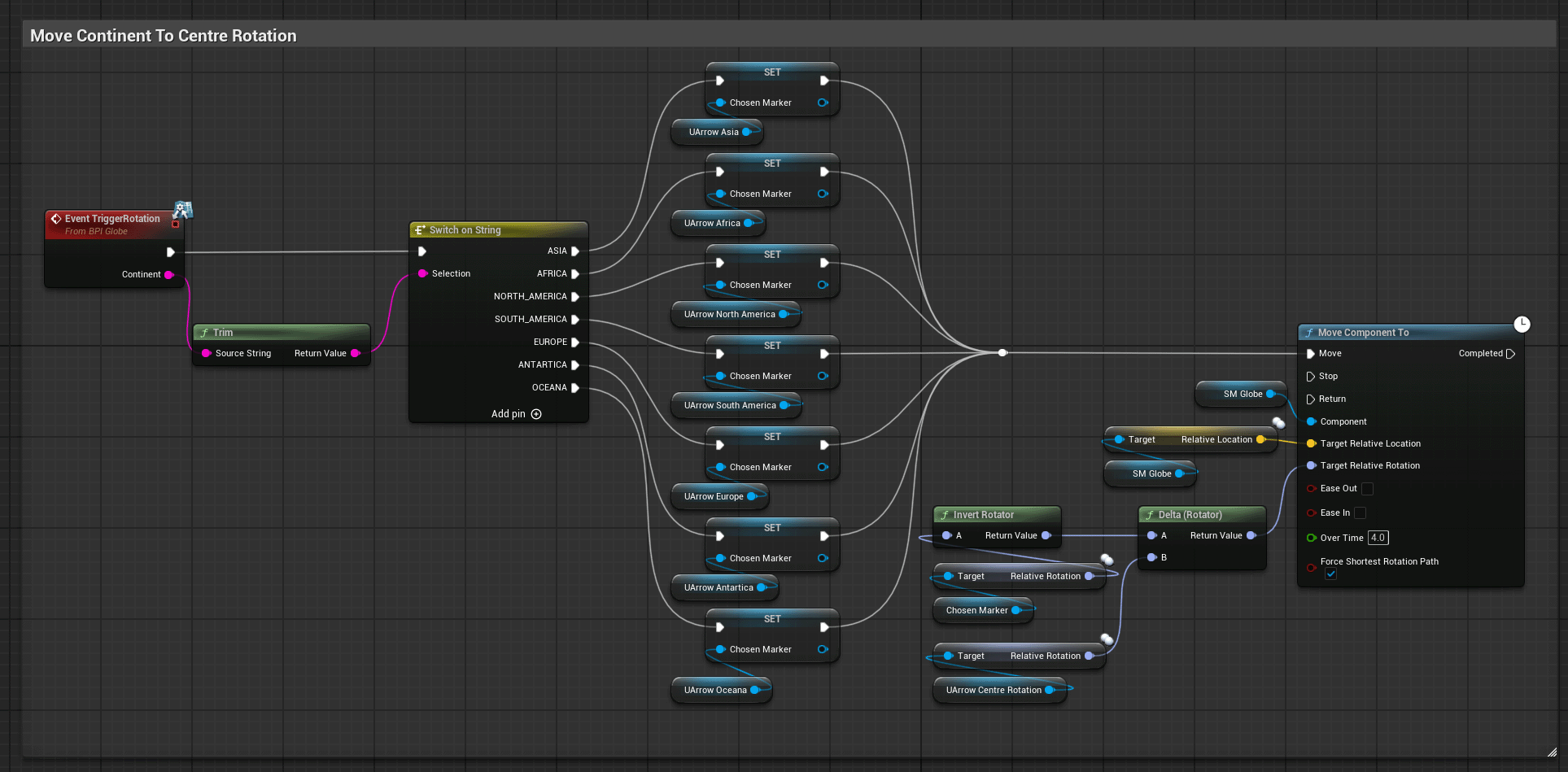

Interface Event (Globe Rotation)

Arrows representing the rotation of each continent are rotated to a centre alignment.

Improvement:

Using coordinates would allow for more precise rotation, converting latitude/longitude to a unit direction vector:

X = cos(latitude) * cos(longitude)

Y = cos(latitude) * sin(longitude)

Z = sin(latitude)

Dir = normalize(X, Y, Z)
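The same conversion as a small Python sketch, assuming latitude and longitude are given in degrees. The function name is hypothetical, and the axis convention (Z up, X through latitude 0 and longitude 0) follows the formulas above; it may need remapping to match the globe's orientation in the engine.

```python
import math


def lat_lon_to_direction(latitude_deg, longitude_deg):
    """Convert latitude/longitude in degrees to a unit direction vector."""
    # The trigonometric functions expect radians.
    lat = math.radians(latitude_deg)
    lon = math.radians(longitude_deg)
    x = math.cos(lat) * math.cos(lon)
    y = math.cos(lat) * math.sin(lon)
    z = math.sin(lat)
    # The vector is already unit length in exact arithmetic; normalising
    # guards against floating-point drift.
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)
```

The globe can then be rotated so that this direction faces the camera, instead of snapping to one fixed rotation per continent.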

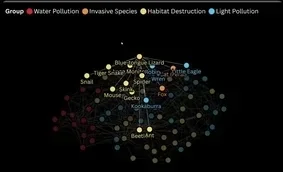

Anything

While I have focused on habitat destruction and water pollution, equally valid human stressors are light pollution and invasive species. These, along with the web of creatures they impact, could set the difficulty level, with a more complex network creating a more complex narrative and gameplay.

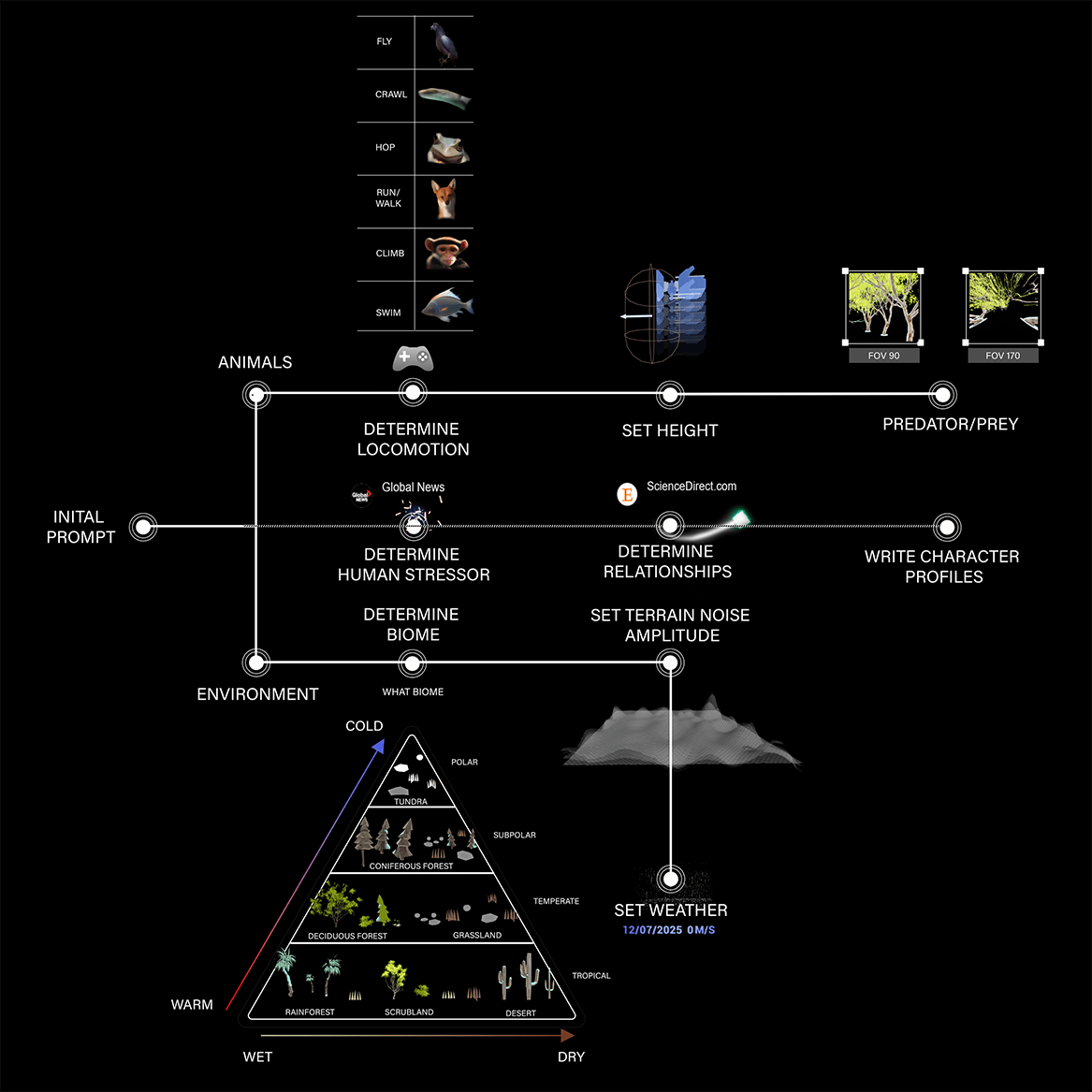

Anywhere

For an LLM-driven system that can already interpret and elaborate, PCG provides the raw structure the model can narrativize. This could extend to generating worlds from a single prompt, distributing assets depending on the biome, setting animal locomotion, and creating narratives by searching the web.

Post Process Materials

Depicting how animals see will always require some anthropomorphizing, but we do know that birds see many more colours than we do, while frogs' vision is blurred.

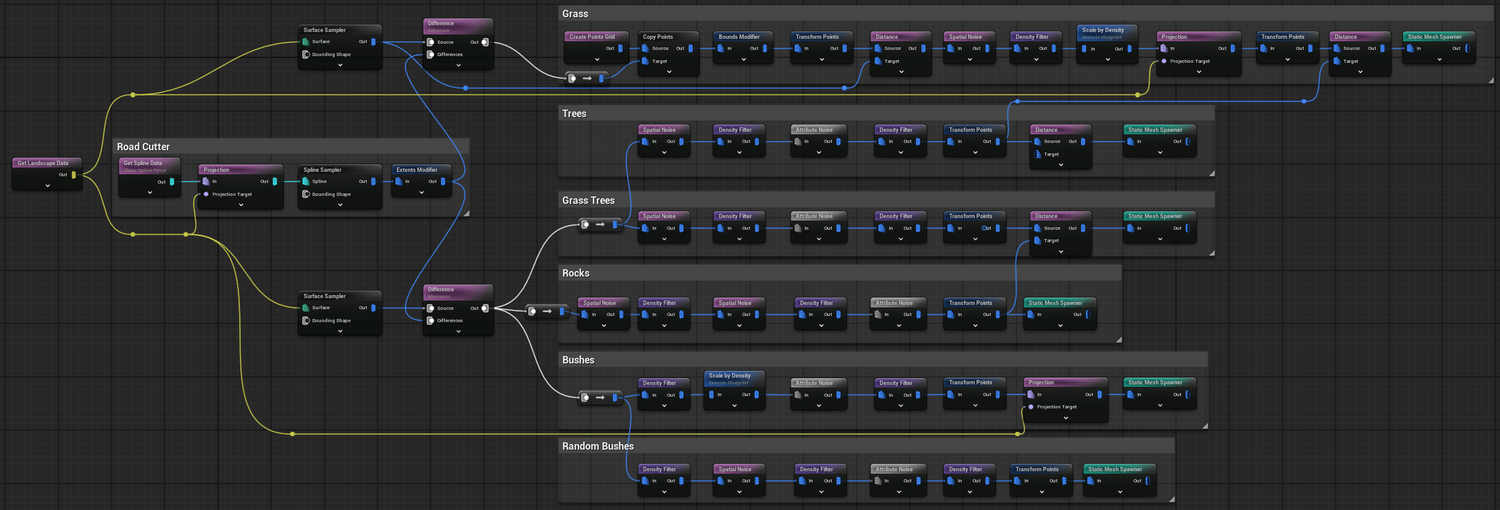

Procedural Content Generation

During the project's development, the levels' layouts and visual language changed more times than I care to remember. Using PCG became a valuable time-saver.